How AI Helps Reduce Bias in Video Interviews & Skill Assessments

Interviews are prone to subjectivity and bias, whether virtual or in-person. From how a candidate looks on camera and what appears in their background to how they speak or how quickly they “click” with the interviewer, unconscious cues can skew human judgment.

Decisions are often shaped by memory, pressure, and instinct, without interviewers even realizing it. But hiring bias doesn’t always come from people; it can come from inconsistency, for instance, when different candidates are asked different questions or when different interviewers evaluate differently.

Despite the best intentions, human recruiters are subject to biases. As hiring scales, these inconsistencies multiply. Research increasingly shows that traditional hiring processes, while human, are often subjective, inconsistent, and difficult to scale fairly.

This is why organizations are shifting toward more structured, data-driven approaches to hiring. Video interviews and AI-supported assessments are part of that shift, not because they remove human judgment, but because they make decision-making more consistent, transparent, and grounded in evidence.

At the same time, there’s still skepticism around whether AI actually reduces bias or simply amplifies it. Even so, AI-driven recruitment platforms are increasingly treated as both a bias-mitigation tool and a compliance necessity, making hiring more structured and data-driven.

What Bias in Hiring Looks Like

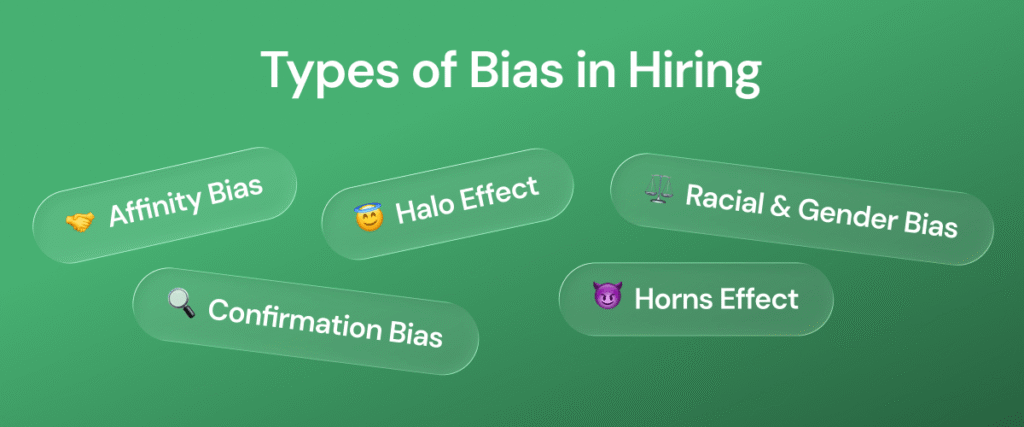

While most hiring teams aim to be fair, bias rarely shows up as an obvious decision. It appears in small, everyday moments. Sometimes, it looks like a strong first impression. Sometimes it’s a preference for candidates who feel familiar. And sometimes it’s simply an inconsistency in how candidates are evaluated. Some common types of bias in hiring include:

Affinity Bias: Favoring candidates who share similar interests, experiences, or backgrounds (e.g., attending the same university).

Confirmation Bias: Forming an early, often subjective, opinion of a candidate and only seeking information that confirms that belief.

Halo Effect: Letting one positive trait influence the entire evaluation, leading to the assumption that the candidate is excellent overall.

Horns Effect: Letting one negative trait (e.g., a bad first impression) overshadow the positive qualities and qualifications.

Name/Racial/Gender Bias: Disfavoring candidates based on their name, ethnicity, or gender, often resulting in fewer interview callbacks for minority candidates.

Traditional hiring relies heavily on human judgment, intuition, and experience. While valuable, this also makes the process vulnerable to subjectivity and unconscious bias, especially at scale. These biases may seem minor, but they can significantly impact who moves forward and who gets overlooked.

Why Minimizing Bias Matters in Hiring

When bias goes unchecked, it affects both hiring outcomes and candidate experience. For organizations, it can lead to:

- Missed talent due to subjective decisions

- Less diverse teams and narrower perspectives

- Lower retention when employees feel overlooked

For candidates, it often means:

- Fewer opportunities to demonstrate real skills

- Decisions based on impressions rather than ability

Reducing bias in the hiring process is not only about fairness; it directly affects hiring quality and long-term business outcomes.

Why Traditional (In-person & Video Meetings) Interviews Can Amplify Bias

Traditional interviews, such as in-person interviews or video meetings over Zoom, are unstructured by design, and that’s where bias finds room to grow. Hiring decisions are influenced by perception, experience, and intuition. While this allows for context-specific evaluation and interpersonal assessment, it makes the process vulnerable to subjectivity, inconsistency, and limited scalability.

When the interview process is unstructured, and interviews are conducted differently for every candidate, fairness becomes difficult to maintain.

- Different questions lead to incomparable answers.

- Real-time conversations introduce pressure and quick judgments.

- Memory-based evaluation makes objective comparison difficult.

- Interviewer variability means each candidate is assessed differently, leading to inconsistent decision-making.

Manual screening and unstructured interviews are not only time-consuming but also less reliable in aligning candidate skills with job requirements.

Bias doesn’t need intent to exist in this environment; it only needs inconsistency. And when hiring decisions are made based on fragmented conversations and subjective recall, even the most qualified candidates can be overlooked.

How Pre-Recorded Video Interviews Enable Objective Decision-Making

Structured video interviews, such as pre-recorded or one-way video interviewing, change how candidates are evaluated by introducing consistency, comparability, and clarity into the process.

1. Standardized Questions Across All Candidates

Every candidate responds to the same set of role-specific questions and is evaluated with the same criteria.

This ensures:

- Interviewers focus on job-relevant criteria

- Evaluations are based on comparable inputs

- Decisions are less influenced by personal bias

When the process is standardized, objective decision-making becomes more achievable.

A structured hiring approach doesn’t just improve consistency; it also helps organizations create a more measurable and scalable recruitment process. Every stage, from sourcing to interviewing and final selection, becomes easier to evaluate when workflows are clearly defined. Understanding the complete recruitment cycle is essential for companies aiming to reduce bias, improve candidate experience, and make better hiring decisions. When recruiters follow a standardized recruitment framework supported by video interviews and skill assessments, they can focus on candidate capabilities instead of subjective impressions, leading to fairer and more data-driven hiring outcomes.

2. Asynchronous Interviews Reduce Pressure Bias

Candidates can record responses on their own time, without the pressure of a live interview.

This helps:

- Reduce snap judgments based on nervousness

- Allow candidates to present more thoughtful responses

- Shift focus from performance under pressure to actual content

3. Replay-Based Evaluation Improves Accuracy

Unlike traditional interviews, video responses can be reviewed multiple times.

This allows hiring teams to:

- Revisit responses instead of relying on memory

- Compare candidates side-by-side

- Make more consistent and evidence-based decisions

Research-backed hiring increasingly emphasizes evidence over intuition, and recorded interviews support exactly that.

4. Multiple Reviewers Reduce Individual Bias

Structured video interviews allow multiple stakeholders to evaluate the same responses independently, which:

- Reduces reliance on a single perspective

- Balances subjective opinions

- Leads to more consistent outcomes
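As a rough illustration of independent multi-reviewer evaluation, the sketch below averages scores that several reviewers gave to the same recorded responses and flags candidates where the reviewers disagree strongly. The reviewer names, the 1 to 5 rubric scale, and the disagreement threshold are all assumptions made for this example, not features of any particular platform.

```python
from statistics import mean, pstdev

# Hypothetical data: each reviewer independently scores the same recorded
# answers on a shared 1-5 rubric. Names and scale are assumptions.
reviews = {
    "candidate_a": {"reviewer_1": 4, "reviewer_2": 4, "reviewer_3": 5},
    "candidate_b": {"reviewer_1": 2, "reviewer_2": 5, "reviewer_3": 3},
}


def summarize_reviews(reviews, disagreement_threshold=1.0):
    """Average independent scores and flag candidates where reviewers diverge."""
    summary = {}
    for candidate, scores in reviews.items():
        values = list(scores.values())
        spread = pstdev(values)  # population standard deviation of the scores
        summary[candidate] = {
            "mean_score": round(mean(values), 2),
            "spread": round(spread, 2),
            # A large spread suggests the reviewers should discuss the
            # response together rather than rely on the average alone.
            "needs_discussion": spread > disagreement_threshold,
        }
    return summary


summary = summarize_reviews(reviews)
for candidate, stats in summary.items():
    print(candidate, stats)
```

Averaging balances individual opinions, while the disagreement flag keeps a wide split between reviewers from being hidden inside a single number.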

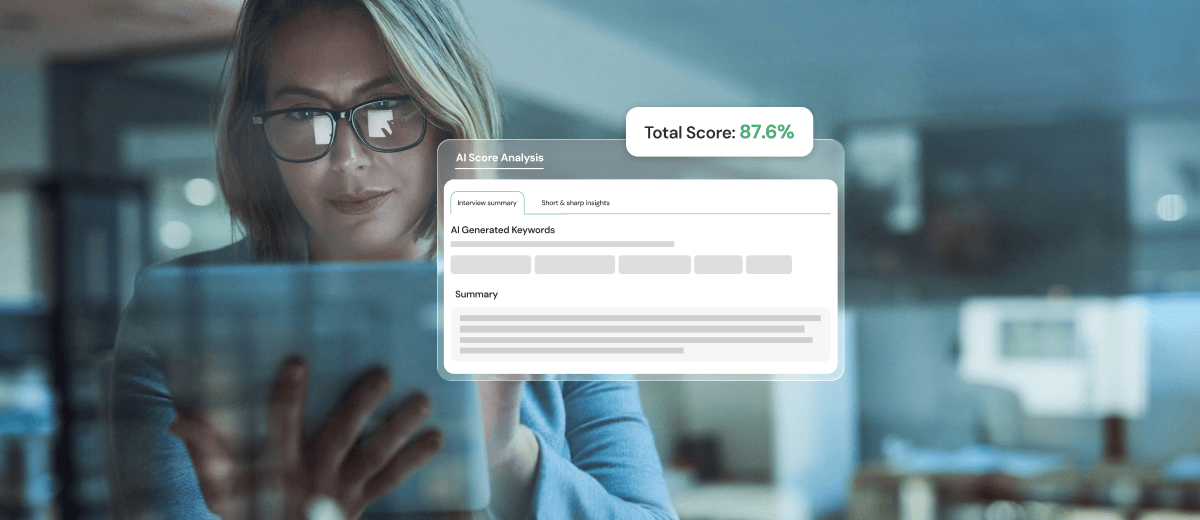

Where AI Supports Fairer Hiring Outcomes

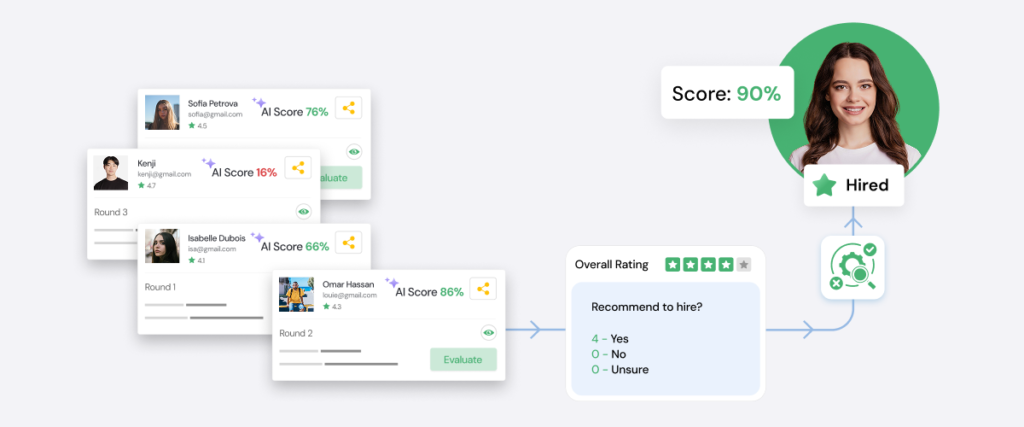

AI plays a supporting role in making hiring more consistent, scalable, and data-driven. When applied thoughtfully, AI helps:

- Standardize evaluation criteria across large applicant pools

- Analyze patterns in candidate data to improve matching accuracy

- Support objective decision-making by focusing on skills and role fit

Some platforms also support anonymized screening in early stages, helping reduce the impact of identifiable information.
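A minimal sketch of what anonymized screening could look like in practice: identifying fields are removed from an application before reviewers see it, and an opaque ID takes their place. The field names and the record layout are hypothetical; real platforms structure applicant data differently.

```python
# Hypothetical field names; a real applicant record would differ by platform.
IDENTIFYING_FIELDS = {
    "name", "email", "photo_url", "date_of_birth", "gender", "address",
}


def anonymize_application(application, candidate_id):
    """Return a copy of the application without identifying fields."""
    redacted = {
        key: value
        for key, value in application.items()
        if key not in IDENTIFYING_FIELDS
    }
    # An opaque reference lets the hiring team re-link the record later.
    redacted["candidate_id"] = candidate_id
    return redacted


application = {
    "name": "Jane Doe",
    "email": "jane@example.com",
    "gender": "F",
    "skills": ["SQL", "Python"],
    "years_experience": 6,
}

screened = anonymize_application(application, candidate_id="C-0001")
print(screened)
```

Removing structured fields is only a first step. Free-text answers and recorded video can still reveal identity, so this kind of redaction reduces the impact of identifiable information rather than eliminating it.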

AI-enabled recruitment systems can significantly improve hiring accuracy and quality, and reduce bias, compared with traditional methods. But AI is not a replacement for human judgment; it’s a system that strengthens it.

The Role of Scrutiny in AI-Driven Hiring

AI can reduce bias, but only when it is actively monitored and evaluated. Without proper scrutiny, AI systems may reflect past decisions and repeat the same patterns at scale, or be programmed to reject or approve candidates automatically with no human oversight.

Research highlights a critical point: lack of algorithm transparency increases the risk of embedded or amplified bias.

Responsible AI use requires:

- Continuous monitoring of outcomes

- Regular audits of algorithms and decision patterns

- Diverse and representative training data

- Clear visibility into how decisions are supported

- Human oversight

AI should not operate as a black box. The more scrutiny applied to AI systems, the more reliable and fair the outcomes become.

Why Transparency and Candidate Consent Matter

As AI becomes part of the hiring process, transparency is essential. Candidates should be clearly informed about:

- When AI is used in evaluations

- How their data is being processed

- What criteria are used to assess their responses

Transparency builds trust and supports compliance with evolving regulations. Equally important is candidate consent. When candidates understand and agree to how their data is used, it creates a more ethical and accountable hiring process.

Because fairness in hiring isn’t just about outcomes. It’s about how those outcomes are achieved and communicated.

Where Bias and Subjectivity Can Still Sneak In

AI does not automatically eliminate bias; it can even amplify it if models are trained on biased historical data or poorly calibrated. Studies on AI‑driven recruitment highlight several recurring issues:

Historical Bias in Training Data: If past hiring decisions favored certain demographics, AI models can learn to replicate those patterns as “success signals.”

Signal Misinterpretation: Factors like speech rhythm and eye contact may be treated as proxies for competence and incorrectly used as performance indicators.

Lack of Oversight: Fully automated decisions without human review or audit trails can entrench hidden biases.

Research on AI‑powered video interviewing platforms such as HireVue warns that algorithmic fairness and transparency are prerequisites, not optional add‑ons.

Best Practices for Fair and Responsible AI in Hiring

Reducing bias requires a governance framework and clear operational design. Some best practices include:

Regular Audits & Fairness Metrics: Organizations are encouraged to periodically run bias audits using frameworks similar to the counterfactual methods proposed for video‑interview models. These audits can track differences in scores across gender, ethnicity, age, and other groups.

Candidate-centric Interface & Transparency: How candidates interact with AI (chatbots, video prompts, feedback loops) shapes how they present themselves and whether they feel they’re being treated fairly. Clear explanations of what AI evaluates, and how, can reduce anxiety and attempts at “gaming” the system.

Skills-forward Design: Platforms that prioritize task‑based assessments (e.g., coding tests, case‑study responses, structured situational judgment tests) over purely behavioral or “vibe‑based” scoring tend to produce more objective outcomes.

Modern hiring requires more than resumes and conversational interviews. Structured skill assessments help organizations evaluate candidates based on real abilities rather than assumptions or first impressions. By testing job-specific competencies through coding tests, aptitude evaluations, situational judgment exercises, or technical assessments, recruiters gain measurable insights into candidate performance. This approach reduces reliance on subjective evaluation methods and creates a more standardized hiring process. When combined with AI-supported video interviews, skill-based hiring enables teams to identify qualified candidates more accurately while improving fairness, consistency, and overall hiring quality across different roles and departments.

Keep Human-in-the-loop: AI should typically assist human decision‑making, especially in final‑round or high‑stakes interviews. HR or hiring managers can review AI scores, override them when needed, and note patterns that look suspicious.
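To make the audit practice above more concrete, here is a small sketch that compares average AI scores and advancement rates across groups. The records, group labels, and score scale are invented for illustration. The impact ratio shown is one simple, widely used screening check (a ratio below 0.8 is a common rule of thumb for taking a closer look); it is an assumption of this example and not the counterfactual method mentioned earlier.

```python
from collections import defaultdict

# Hypothetical audit records: (group label, AI score 0-100, advanced to next stage)
records = [
    ("group_x", 82, True),
    ("group_x", 74, True),
    ("group_x", 65, False),
    ("group_x", 90, True),
    ("group_y", 80, True),
    ("group_y", 60, False),
    ("group_y", 58, False),
    ("group_y", 77, True),
]


def audit_by_group(records):
    """Compute the mean score and selection rate for each group."""
    grouped = defaultdict(list)
    for group, score, advanced in records:
        grouped[group].append((score, advanced))

    report = {}
    for group, rows in grouped.items():
        scores = [score for score, _ in rows]
        advanced_count = sum(1 for _, advanced in rows if advanced)
        report[group] = {
            "mean_score": sum(scores) / len(scores),
            "selection_rate": advanced_count / len(rows),
        }
    return report


def impact_ratio(report):
    """Lowest group selection rate divided by the highest."""
    rates = [stats["selection_rate"] for stats in report.values()]
    highest = max(rates)
    return min(rates) / highest if highest > 0 else None


report = audit_by_group(records)
ratio = impact_ratio(report)
print(report)
print("impact ratio:", round(ratio, 2))
```

A gap like this does not prove the model is biased on its own, but it is the kind of pattern a human reviewer would want to investigate before trusting the scores.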

Turning Subjective Hiring into Structured, Fair Decisions

AI-driven video interviewing and assessments, when designed with structure and transparency, can significantly reduce the noise and inconsistency that allow bias to thrive. These formats are a way to turn subjective, memory‑driven hiring into a fairer, more evidence‑based system that benefits both candidates and organizations.