Human-Centered AI in Hiring: Keeping People in Control in the Age of Automation

Leaders across industries are recognizing that real transformation is rooted in people and culture. As the World Economic Forum emphasized, successful AI adoption depends not on deploying more technology, but on empowering people to work with it.

In hiring, this distinction matters. Artificial intelligence is quickly becoming the backbone of modern talent acquisition, shaping how organizations hire at scale. But the future does not belong to the most complex algorithm. It belongs to human-centered AI.

At the same time, scrutiny around AI in employment decisions is intensifying. Regulators are introducing audit requirements. New AI policies are emerging across jurisdictions. Candidates are questioning algorithmic fairness and demanding greater transparency.

This environment is forcing a philosophical shift. Organizations are beginning to distinguish between two types of AI in hiring:

- AI designed to predict and decide

- AI designed to support and inform

This distinction defines the rise of human-centered AI.

What is Human-Centered AI in Hiring?

Human-centered AI is designed to enhance human judgment, not replace it. Traditional automation focuses on removing manual effort and maximizing efficiency. Human-centered AI, by contrast, focuses on clarity, accountability, and collaboration between humans and systems.

In hiring, the difference looks something like this:

| Traditional Automation | Human-Centered AI |
|---|---|
| Replaces tasks | Augments decision-making |
| Transactional candidate interaction | Meaningful candidate-centric engagement |
| Predicts outcomes | Provides actionable insights |
| Black-box scoring | Transparent outputs |
| Reduces human involvement | Keeps humans in control |
| Impersonal workflow | Personalized experiences at scale |

Hiring is not just a workflow. It shapes livelihoods, careers, and company culture. The stakes demand a higher standard.

Why Hiring Requires a Different Approach to AI

Hiring decisions directly impact business outcomes, organizational culture, and long-term workforce growth. This brings legal accountability, reputational risks, ethical responsibility, and regulatory scrutiny.

Today’s hiring technologies operate within expanding frameworks such as GDPR, CCPA, EEOC guidelines, the ADA, New York City’s Automated Employment Decision Tools Law (AEDT), and Illinois’ Artificial Intelligence Video Interview Act (AIVIA). Globally, the EU AI Act signals even greater emphasis on transparency and explainability in employment-related systems.

In the rush to automate recruitment, parts of the industry have leaned heavily on complex algorithmic models: systems that ingest large volumes of data and generate rankings or scores without clearly explaining how those conclusions were reached.

While sophisticated modeling can provide analytical depth, opacity introduces risk. When outputs cannot be easily explained to candidates, recruiters, or regulators, trust erodes. This leads to the very things the market fears most: AI bias, opacity, and dehumanization.

Two AI Philosophies in Talent Technology

1. Predictive, Model-Heavy AI

Some platforms prioritize deep predictive analytics, behavioral modeling, and algorithmic ranking. These systems aim to identify patterns, forecast performance, and automate candidate comparison at scale.

The strength of this approach lies in advanced modeling. The risk lies in opacity.

Personality scores, predictive outputs, and automated rankings that cannot be clearly explained are hard to defend to candidates or regulators. Over-automation can also crowd out human nuance: intuition, empathy, and contextual judgment.

2. Transparent, Assistive AI

An alternative philosophy focuses on AI as a support system. Instead of predicting who should be hired, it helps ensure:

- Structured, consistent interviews

- Clear documentation

- Fair evaluation standards

- Human final decision-making

This approach recognizes that AI can improve consistency and reduce administrative burden, while humans remain accountable for hiring outcomes.

How Jobma Applies Human-Centered AI in Practice

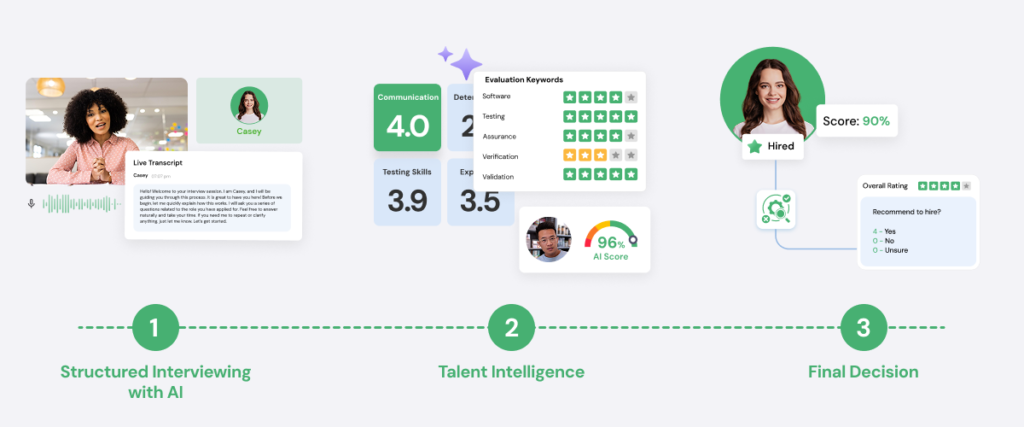

Jobma’s AI solutions are designed to operate as assistive tools, not decision-makers. Every insight is transparent, ethical, and explainable, and can be reviewed by a human before any decision is made. The focus is on “Glass Box” transparency.

This approach reflects a belief that structured intelligence strengthens human judgment, while preserving accountability. Below is how that philosophy translates into platform design.

Structured Interview Intelligence: Jobma uses AI to improve the quality and consistency of interviews. This includes resume parsing to extract relevant experience, competency-based organization of candidate responses, automated interview summaries to support reviewer alignment, and structured evaluation templates that reduce subjective bias.

The system does not automatically approve or reject candidates, generate opaque personality scores, or replace the hiring manager’s discretion. AI outputs are assistive, and the final hiring authority remains human. This ensures both fairness and defensibility.
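The separation described above, where AI output informs but only a person decides, can be sketched as a small data model. This is an illustrative sketch, not Jobma's implementation: the class names `AssistiveInsight` and `CandidateReview`, the decision labels, and the requirement of a written rationale are all assumptions made for the example.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass(frozen=True)
class AssistiveInsight:
    """Hypothetical AI-generated note: it informs a reviewer and carries no verdict."""
    competency: str
    summary: str
    evidence: str  # e.g. a transcript excerpt the reviewer can check


@dataclass
class CandidateReview:
    """Hypothetical review record; the decision fields start empty."""
    candidate_id: str
    insights: List[AssistiveInsight] = field(default_factory=list)
    decision: Optional[str] = None
    decided_by: Optional[str] = None
    rationale: Optional[str] = None

    def record_decision(self, decision: str, reviewer: str, rationale: str) -> None:
        """Accept a decision only from a named reviewer with a written rationale."""
        if decision not in {"advance", "reject", "hold"}:
            raise ValueError(f"unknown decision: {decision!r}")
        if not reviewer.strip():
            raise PermissionError("a human reviewer must be named")
        if not rationale.strip():
            raise ValueError("a written rationale is required")
        self.decision = decision
        self.decided_by = reviewer
        self.rationale = rationale
```

Note that `AssistiveInsight` has no score or recommendation field, so nothing in the model can advance or reject a candidate without a call to `record_decision` by a named person.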

Transparent Documentation & Audit Readiness: Human-centered AI requires traceability. Jobma provides time-stamped interview transcripts, replayable video responses, structured scoring documentation, and centralized evaluation records.

The interview transcripts generated from audio or video responses create documented, timestamped records that multiple reviewers can assess. This preserves context, supports calibration across hiring panels, enables auditability, and reduces reliance on memory or impression-based feedback.

These features create a defensible audit trail. If questions arise, internally or externally, decisions can be reviewed with evidence. Transparency protects both candidates and organizations.
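One common way to make a time-stamped record trail tamper-evident is to chain each entry to the hash of the previous one. The sketch below illustrates that general technique only; the article does not say how Jobma stores its records, and the `AuditTrail` class and its field names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone


class AuditTrail:
    """Hypothetical append-only log; each entry stores the previous entry's hash."""

    def __init__(self) -> None:
        self._entries = []

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash every field except the stored hash itself, in a stable key order.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
        return hashlib.sha256(encoded).hexdigest()

    def append(self, actor: str, action: str, detail: dict) -> dict:
        """Add a time-stamped entry linked to the one before it."""
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "detail": detail,
            "prev_hash": self._entries[-1]["hash"] if self._entries else "",
        }
        entry["hash"] = self._digest(entry)
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Return True only if no entry was altered, removed, or reordered."""
        prev_hash = ""
        for entry in self._entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True
```

Because each hash covers the previous hash, changing an earlier score or note after the fact causes `verify()` to fail, which is the property an evidence-based review relies on.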

AI-Assisted Interview Integrity: Asynchronous hiring presents challenges around fairness and process consistency. Jobma incorporates non-invasive monitoring features that can detect behaviors such as browser tab switching during interviews, multiple faces appearing on screen, and disruptions that may compromise interview integrity.

These tools operate strictly within the session itself and do not capture unrelated data or store biometric identifiers. They are designed to maintain fairness across candidates and enhance trust in remote environments, not for surveillance.

Candidate Verification: To deter proxies and AI-assisted fraud, Jobma includes verification steps that confirm the interviewee’s identity. Enhanced ID verification allows candidates to validate themselves directly within the interview workflow, ensuring fairness and accountability throughout the hiring process.

Surfacing Patterns with Human Oversight: Language analysis tools can highlight grammar patterns, fluency indicators, or response structure when communication skills are relevant to a role. These systems do not label answers as “good” or “bad.” They surface observable patterns aligned with defined competencies. The interpretation and final decision remain human.

Bias Reduction Through Standardization: Rather than relying solely on algorithmic correction, Jobma emphasizes structural bias mitigation through standardized question workflows, consistent question rubrics, and panel review capabilities. Bias reduction is approached as a workflow design challenge, not merely a data science problem.
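To illustrate how a shared rubric and panel review can work together, the sketch below has every reviewer score the same competencies on the same scale, then flags competencies where reviewers disagree so the panel can discuss them. The competency names, the 1 to 5 scale, and the `max_spread` threshold are assumptions chosen for the example, not Jobma settings.

```python
from statistics import mean

# Hypothetical rubric: every reviewer scores the same competencies on one scale.
RUBRIC = ("communication", "problem_solving", "role_knowledge")
SCALE = range(1, 6)  # integer scores 1 through 5


def summarize_panel(scores_by_reviewer: dict, max_spread: int = 1) -> dict:
    """Summarize panel scores per competency and flag disagreement for discussion."""
    if not scores_by_reviewer:
        raise ValueError("at least one reviewer is required")
    summary = {}
    for competency in RUBRIC:
        values = []
        for reviewer, scores in scores_by_reviewer.items():
            if competency not in scores:
                raise ValueError(f"{reviewer} did not score {competency}")
            if scores[competency] not in SCALE:
                raise ValueError(f"{reviewer} gave an out-of-scale score for {competency}")
            values.append(scores[competency])
        spread = max(values) - min(values)
        summary[competency] = {
            "mean": mean(values),
            "spread": spread,
            # A flag for human calibration, not a verdict on the candidate.
            "needs_calibration": spread > max_spread,
        }
    return summary
```

The function rejects incomplete or out-of-scale scorecards rather than filling gaps, so every candidate is assessed on the same criteria, and a large spread prompts a conversation instead of being averaged away silently.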

Privacy, Consent, & Global Compliance: Human-centered AI must be backed by strong governance. Jobma aligns its practices with global privacy and employment frameworks such as:

- General Data Protection Regulation (GDPR)

- California Consumer Privacy Act (CCPA)

- EEOC and ADA considerations

- New York City AEDT requirements

- Illinois AIVIA disclosure standards

Key safeguards include:

- Candidate disclosures before recorded interviews

- Consent-based data collection

- Defined data retention policies

- Right to access, correction, and deletion

- No sale of personal data

- Secure cloud infrastructure

- Transparency around how AI insights are generated and used

Compliance is not a feature – it’s a foundation.

What Jobma Intentionally Avoids

Designing ethical AI is not only about what you build, but what you choose not to build. In a market where some platforms emphasize increasingly complex predictive analytics, Jobma makes intentional tradeoffs. The platform does not prioritize:

- Fully automated hiring decisions

- Opaque scores without explanation

- Irreversible automated rejection mechanisms

- Unexplainable behavioral forecasting models

While advanced predictive modeling can offer analytical depth, Jobma’s focus is on transparency, usability, and structured human oversight.

Faster, Compliant Hiring without Losing Context

When AI is designed to support human judgment rather than replace it, organizations gain resilience, consistency, and trust at scale.

The outcomes that define success:

Hiring Decisions You Can Stand Behind: Hiring decisions must be explainable, given the current regulatory climate. Human-centered AI strengthens defensibility by embedding structure into every stage of evaluation: standardized interview questions, consistent competency-based scoring, time-stamped transcripts, and documented reviewer feedback.

Instead of relying on memory or subjective impressions, hiring teams operate with documented evidence. If decisions are questioned by candidates, regulators, or internal stakeholders, the rationale is documented and reviewable.

Increased Candidate Trust: When candidates know they are being evaluated on their merits and that a human being is ultimately reviewing their story, they are more likely to engage authentically. Transparency reduces the “interview anxiety” associated with being judged by a machine. A structured yet human process builds confidence in the employer.

Stronger Recruiter Adoption & Confidence: Adoption is one of the most overlooked variables when it comes to hiring tech. If recruiters don’t trust a system, they either ignore it or rely on it blindly. Neither is sustainable. Assistive AI works because it stays in a supportive role. It supports organization and documentation without overriding professional judgment. Recruiters remain accountable, and their expertise matters.

Reduced Algorithmic Bias: Keeping AI in an assistive role rather than a decision-making one helps avoid the trap of “encoded bias.” These tools focus on job-related competencies such as communication clarity or skill alignment, rather than unexplainable personality traits. Standardized workflows and structured rubrics further reduce variability across interviewers.

Lower Regulatory and Reputational Risk: As laws governing employment-related AI expand, organizations must anticipate compliance challenges before they arise. Human-centered AI reduces exposure by avoiding fully automated employment decisions, preserving human review layers, maintaining documented audit trails, embedding privacy and consent mechanisms, and limiting reliance on opaque predictive scoring.

Protection of Employer Brand: Companies that prioritize “the human element” are seen as more empathetic and ethical. In a competitive talent market, being known as a responsible and ethical employer influences acceptance rates and long-term perception.

Faster Hiring without Sacrificing Oversight: Efficiency remains essential, especially for high-volume or distributed hiring teams. Automating time-consuming administrative work, such as organizing interview data, generating summaries, maintaining documentation, and streamlining panel review, reduces friction. Recruiters spend less time on coordination and more time on engagement and strategic decision-making.

AI as a Collaborator, Not a Replacement

Hiring is the most “human” thing a company does; it is the process of building culture and community. When you take a human-centered approach to AI in hiring, you keep people in control, embed compliance into design, improve fairness, and strengthen recruiter capability.

Human-centered AI is not about limiting technology; it’s about aligning technology with responsibility.